Async-Parallel-Spezialisten

Markdown anzeigenSpezialisten am Ende produzieren tiefgreifende Artefakte in ihrem eigenen Vokabular; Die alte Organisation nutzte das Ausführungszentrum, um in Echtzeit zu übersetzen. KI kann als asynchrone Übersetzungsschicht zwischen Domänen eingesetzt werden und die meisten Betriebsbesprechungen ersetzen, wenn die zugrunde liegenden Artefakte vertrauenswürdig bleiben.

Die menschliche InversionWichtige Erkenntnisse

- Das Koordinationsproblem besteht nicht in „weniger Besprechungen“, sondern in übergreifenden Grundlagen ohne eine menschliche Mitte, die Live-Übersetzungen durchführt.

- KI als Übersetzungssubstrat ermöglicht es PM, Design und Engineering, tief in nativen Artefakten zu bleiben und gleichzeitig asynchron auf die Implikationen des jeweils anderen zu reagieren.

- Durch die Übersetzung können Einschränkungen stillschweigend aufgehoben werden. Getreue Zusammenfassungen und dauerhafte Artefaktintegrität entscheiden darüber, ob die Kohärenz anhält oder abweicht. – Die meisten operativen Besprechungsarten (Standups, Übergaben, viele Synchronisierungen) schrumpfen strukturell, weil ihre Kopplungsbegründung verschwindet, nicht aufgrund von Richtlinien, sondern weil die Arbeit verschoben wurde.

- Sicherheit, Recht, Marketing und Betriebsabläufe profitieren oft mehr davon, wenn man sie frühzeitig ans Ende bringt, als Produkt, Design und Technik allein, weil diese Funktionen in der Vergangenheit zu spät eingeführt wurden, um Grundlagen und Überprüfung zu schaffen.

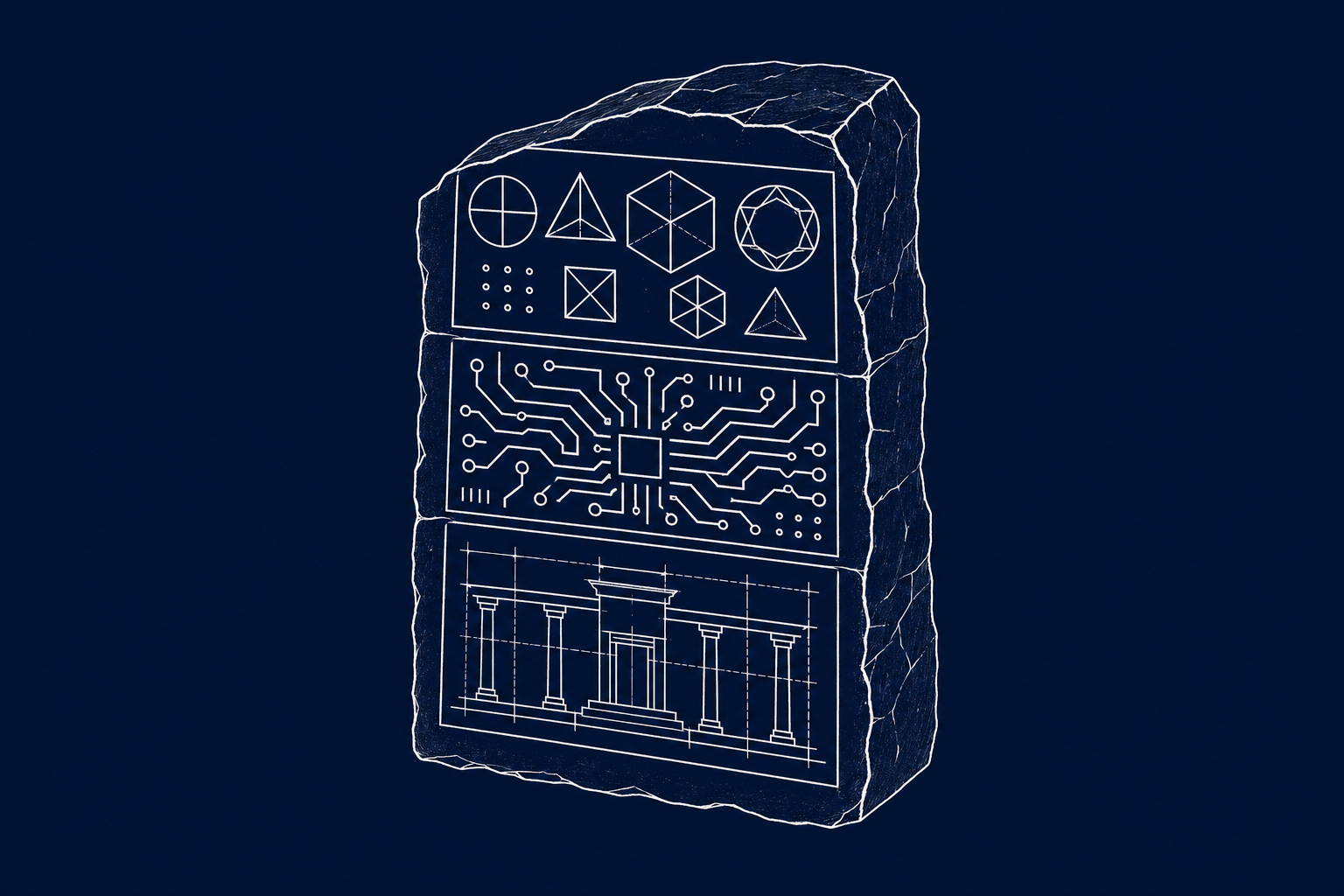

title: "The Human Inversion: Async Parallel Specialists" excerpt: 'Specialists at the ends produce deep artifacts in their own vocabularies; the old org used the execution middle to translate in real time. AI can sit between domains as an async translation layer—replacing most operational meetings, if the underlying artifacts stay trustworthy.' published: true publishedDate: '2026-04-29' category: "AI" tags: ["ai", "software development", "teams", "meetings", "async", "organization", "the human inversion"] heroImage: "the-human-inversion-part-3-hero.png" heroImageSquare: "the-human-inversion-part-3-hero-square.png" ogImage: "the-human-inversion-part-3-hero-og.png" heroImageStyle: "keep-proportions" read_time: 11 series: "The Human Inversion" seriesSlug: "the-human-inversion" seriesPart: 3 seriesTotal: 5

The previous post argued that the hiring trigger has changed: teams should stay small until founder attention, not execution demand, becomes the bottleneck. This post is about what happens after that trigger fires — when you actually do need to add specialists, and the question becomes how they work together without reintroducing the coordination tax that the inversion was supposed to eliminate.

Two clarifications before the argument:

First, "specialist" here means deep, not narrow in the sense of a small, brittle slice of responsibility. Narrow is how little of the problem you touch; deep is how much judgment you bring to what you own.

A specialist PM still carries the full integrative breadth of product work — customer, data, business, market — but anchors it in foundation and review rather than in execution craft. A specialist designer is someone whose depth is in systems and constraints; a specialist engineer is someone whose depth is in architecture and technical judgment. The depth is real, but it's depth at the ends of the process, not depth in the old middle. The thing they're specialists at is the kind of work AI can't do.

Second, "scale" here means past the attention ceiling of a single founder-generalist, not late-stage scale. The shape I'm describing is what a five-to-twenty-person team looks like when it's organized around the inversion. Larger organizations introduce additional complications that aren't the focus here.

The old coordination model

When execution sat in the middle, specialists coordinated through handoffs. The PM wrote the spec; the designer read it and produced designs; the engineer built from the designs. Each handoff was a moment of explicit translation: the designer had to convert the PM's language into visual and interaction decisions, and the engineer had to convert the designer's decisions into technical ones. The translation was slow and lossy, but it had a property that's easy to undervalue in retrospect: it kept the specialists in real-time contact with each other's reality. The designer couldn't avoid understanding the PM's intent because they were holding the spec in their hands and making design decisions against it. The engineer couldn't avoid understanding the design because they were building from it screen by screen.

Meetings existed to smooth this process. Spec reviews existed because written specs were incomplete and the designer needed to ask questions. Design reviews existed because designs were ambiguous and the engineer needed to negotiate what was actually implementable. Standups existed because sequential dependencies meant everyone needed to know where everyone else was. Cross-functional syncs existed because decisions made in one discipline had implications for others that weren't always legible in the artifacts themselves.

None of this was pure waste. The meetings carried real information that the artifacts alone couldn't. But the meetings were expensive, and their expense was justified only because the underlying coupling — the handoffs, the sequential dependencies, the translation costs — was real and unavoidable.

Remove the middle, and the justification goes with it.

The new coupling problem

Here's what specialists at the ends actually do:

- A PM does deep market research, writes positioning documents, validates personas, and articulates the guarantees the product is committing to. The PM holds four knowledge domains in integrated tension: customer understanding, data fluency, business knowledge (go-to-market, stakeholders, economics, compliance), and competitive landscape. No other specialist carries all four simultaneously, which is what makes the PM's foundation work integrative rather than just deep.

- A designer authors and evolves a design system, establishes interaction constraints, and defines what "quality" means visually and experientially. The designer holds the cross-cutting decisions that determine whether a hundred future screens cohere or drift: spacing, typography, component behavior, accessibility standards, motion language, and the interaction patterns that make a product feel considered rather than assembled. When AI generates UI, the design system is what keeps the output coherent. Without it, every screen is a one-off.

- An engineer authors architectural standards, defines coding conventions, and establishes what classes of problems the system is structurally immune to. The engineer holds the decisions that determine whether the codebase compounds or rots: which abstractions are load-bearing, which invariants are enforced by the type system rather than by convention, where the boundaries between services sit, and what failure modes are structurally excluded rather than tested against. When AI writes code, the architectural standards are what keep it from introducing the kind of drift that takes months to surface and quarters to unwind.

These are foundational artifacts. They're durable. They govern thousands of downstream decisions. In the old model they were what got neglected because execution ate the calendar. In the new model they're the primary work.

But notice: none of these artifacts were traditionally written for each other. The PM's positioning document was written for the PM themselves, or for investors, or for marketing. The designer's system was written for designers. The architectural standards were written for engineers. Cross-disciplinary legibility was never a design requirement for these artifacts because the middle — the handoff zone — was where cross-disciplinary understanding got worked out in real time.

Take the middle away and the foundational artifacts have to do a job they weren't built for. They have to be legible across disciplines, because there's no longer a translation step where a human in the middle converts one discipline's work into another's.

This is the real coordination problem the inversion creates. Not "how do we replace meetings" — that's the surface symptom. The underlying problem is that the foundational artifacts each specialist produces need to become cross-readable, or the specialists will drift apart into parallel tracks that produce internally coherent work that doesn't fit together.

The naive solution is to have specialists write more legibly — PMs learning to write for engineers, engineers learning to write for PMs. This helps at the margin but doesn't scale. Every discipline has genuine depth that doesn't compress easily into the vocabulary of another discipline. A designer's reasoning about density and hierarchy can't be fully expressed in terms a PM will internalize without training; the same is true in every direction. Expecting everyone to become polymaths is a nice aspiration that doesn't produce reliable teams.

You can see the failure mode in miniature when a single operator crosses tool boundaries. Agents in one tool can't read the context accumulated in another; context carefully built in one harness goes stale the moment work shifts to a different one; rate limits force operators to hand off mid-task to a fresh tool with no memory of where things stood.

What looks like a tool-interoperability complaint is actually a cross-discipline coordination problem at individual scale — the translation substrate has a gap, and the work loses continuity across it. At team scale, the same gap between specialist-produced artifacts produces the same decay, just slower and more expensive to detect.

AI as coordination substrate

The actual solution is that AI sits between specialists as a translation layer. The engineer doesn't need to read the designer's system document fluently; they ask an AI what it implies for the component they're building. The PM doesn't need to internalize the architecture doc; they ask an AI whether their proposed feature is in tension with any architectural commitment. The designer doesn't need to parse the persona doc; they ask AI what it implies for the interaction patterns they're evolving.

This is different from AI doing the cross-disciplinary thinking. The specialists still own the judgment within their domains. But the translation between domains — the thing that used to require synchronous meetings so humans could explain their work to each other — is absorbed by a layer that doesn't require calendar alignment.

The mechanism has a failure mode worth naming. The translation layer depends on the AI's translation being faithful — on the summary it produces for the engineer of the PM's positioning doc actually reflecting what the doc says, and on the implications it surfaces for the designer actually following from the engineer's architectural commitments.

When the translation is clean, cross-disciplinary coherence emerges without meetings. When the translation silently drops a constraint — when the AI summarizes the architectural standards for the PM without mentioning the one commitment that rules out their proposed feature — the specialists stay in their depth but the work they produce against each other's artifacts is subtly misaligned. The misalignment compounds quietly, because nothing in the current artifact flow surfaces the omission.

The next post takes this up. The short version: translation alone isn't sufficient; you also need write-integrity on the underlying artifacts, so translation errors are auditable after the fact and the artifacts themselves can be trusted to be current rather than silently drifted.

This is why async parallel specialist work is possible now in a way it wasn't five years ago. The constraint was never that specialists couldn't work in parallel; it was that parallel work without real-time translation produced incoherence within weeks. AI as a translation substrate removes that constraint. Specialists can stay in their depth, produce foundational artifacts in their native vocabulary, and trust that other specialists will be able to act on the implications of those artifacts without requiring a meeting to unpack them.

The same mechanism runs on the review side. A PM reviewing a shipped feature doesn't need to read the engineer's implementation to understand it; they ask AI to surface whether the implementation actually delivers the positioning commitment the PM authored. A designer reviewing the same feature doesn't need to decode technical constraints; they ask AI whether the implementation respects the design system and flag specific drifts. An engineer reviewing the feature doesn't need a design walkthrough; they ask AI whether the implementation matches the design intent and where it compromised.

Three specialists, three reviews, running in parallel, no shared meeting, each producing findings in their native discipline while AI handles the translation between what they produced and what the others need to know.

This pattern is already running in the wild at solo-operator scale, which is useful preview evidence. The strongest version in practice looks like this: a single operator running three to four parallel AI agents, each working against a shared body of durable markdown — standards, skills, memory, process documents — that the operator continuously distills and refines as the work produces new reasoning. The agents don't coordinate with each other directly; they coordinate through the markdown. The operator isn't running agents in sequence and stitching outputs together. They're running them genuinely in parallel, each pulling what it needs from the shared substrate.

Every property the team-scale version needs is present in the solo-scale version: parallel work, no synchronous coordination between the working units, foundational artifacts that carry the coordination load, durable markdown as the translation layer. The solo operator is running a one-person version of the async parallel specialist architecture and getting compound output. The team-scale version generalizes this by replacing "agents running against my rubric" with "specialists plus agents running against a shared rubric." The same load-bearing mechanism scales up.

Beyond the trio

Everything above is written as if the team is PM plus designer plus engineer. That's the classical trio and it's useful for exposition, but it's also a simplification. Real software organizations include marketing, legal, compliance, operations, customer success, sales engineering, security, data, and support. These functions have historically coupled to product through a specific category of meeting: the alignment meeting. The launch review. The legal sign-off. The marketing enablement handoff. The ops readiness check. The security review before ship.

These meetings existed for the same reason spec reviews existed — cross-functional context wasn't legible across discipline boundaries, so humans translated in real time — but they had an additional pathology. The non-product disciplines weren't contributing to the artifact; they were gating it. Marketing got the product to package it for the world after product people had already decided what it was. Legal got the product to sign off on it after design and engineering had already committed to the shape. Ops got the product to make it deployable after architecture had already been chosen. These disciplines lived almost entirely at foundation (playbooks, policies, positioning) and review (launch readiness, compliance, deployability), but they were exiled to the gates rather than integrated at the ends. Their concerns landed in the last ten percent of a timeline, as blockers rather than as inputs.

The inversion doesn't just apply to them — it applies more consequentially to them than to the core trio. Their historical exclusion was deeper, so the amount of leverage recovered by integration is larger. A marketing specialist working async against the same foundational artifacts as the PM becomes a contributor to positioning rather than a downstream packager. A legal specialist who can query the rubric and the product decisions async can flag risk at foundation time rather than at gate time. An ops specialist can surface deployability constraints as architectural inputs rather than as readiness blockers discovered in week eleven.

Security is the sharpest case. Historically it lived as the ship-blocker of last resort — a gate at the end of the pipeline where findings arrived late and expensive, and where the security team's leverage was almost entirely negative (stopping bad things from shipping) rather than positive (shaping what got built).

In the inverted shape, security expertise at foundation time means the architectural standards encode invariants before a line of code is written, and the review layer has structured claims to check against rather than a diff to read cold. Teams that leave security at the gate in the AI-accelerated world will find themselves overwhelmed — not primarily by attackers, but by AI-generated vulnerability reports they can't triage at volume, because nothing attests provenance on either the incoming code or the incoming report.

Security as a foundation discipline is the difference between a review layer that scales with agent output and one that collapses under it.

The meeting question, directly

Does the inversion actually produce a drastic reduction in meetings, or is that just the latest version of a promise the industry has been making and breaking for years?

Meetings that go away structurally: Standups, handoff meetings, spec reviews, design reviews, cross-functional syncs, most status meetings, most "align on this" meetings, and most alignment meetings between product and the surrounding functions (e.g., launch reviews, legal sign-offs, marketing enablement handoffs, ops readiness checks).

These existed because of execution coupling and real-time translation needs. Both are now absorbed — execution into AI, translation into AI. The meetings don't go away because we all agreed to have fewer meetings. They go away because the thing they were doing isn't a thing anymore. This is probably seventy to ninety percent of current operational meeting volume for teams that actually restructure around the inversion.

Meetings that change character: Strategy meetings, architecture discussions, and quality calibration sessions. These existed for real-time generative thinking, not just coordination. They persist, but they happen less frequently, with more preparation, and with fewer people. A strategy meeting that used to happen weekly with eight people becomes a strategy meeting that happens monthly with three.

Meetings that stay irreducible: There are two: genuinely novel strategic decisions where there's no existing framework to reconcile against, and trust formation between humans who need to build mutual models of each other's judgment before they can work async. New hires require onboarding conversations. Pivots require real-time debate. Major quality calibration requires seeing each other respond to actual work in real time. These can't be AI-mediated even in principle, because what's being built in these meetings isn't coordination — it's the shared premises that all the async coordination runs on top of.

So the answer is: yes, a drastic reduction, specifically in the operational meeting layer where the bulk of current time actually goes. No, not to zero — because some meetings weren't wasteful in the first place; they were the place where premises got set and trust got built.

The diagnostic

Meeting volume is now a legible external signal of whether a team has actually restructured around AI or is using AI to accelerate a pre-AI organizational shape. Teams still running heavy operational meeting schedules in 2027 will not be teams that haven't adopted AI — every team will have adopted AI by then. They'll be teams that adopted AI as a productivity tool inside the old org structure rather than as a reason to rebuild the org structure.

A secondary diagnostic runs at the review layer. How much of a reviewer's time is spent verifying what the AI claims it did versus re-deriving what the AI should have done? Teams with the right infrastructure in place trend toward the first: a short verification of a structured claim, with the heavy lifting reserved for the surfaces the agent flagged as unverified. Teams without it stay stuck on the second: reading the diff cold because the agent's reasoning isn't captured anywhere queryable. The ratio moves slowly, but when it moves, it's a strong signal that the write-integrity layer that the next post describes is actually in place rather than aspirational.

The difference will first show up not in raw output — AI will roughly equalize output across teams — but in the quality of judgment, the coherence of the product, the depth of the strategic commitments. All of which are downstream of whether humans had the attention to do foundation and review properly, which is downstream of whether operational coordination was actually absorbed or just dressed up in AI tooling.

The next post addresses the unresolved piece: once specialists are working async in parallel, something still has to catch the tensions between their independent work before those tensions compound into product incoherence.